How to Choose a Survey3 Camera Model

Choosing a Lens Option for Survey3:

We offer two lenses for the Survey3 cameras: Survey3W (Wide) and Survey3N (Narrow). The terms wide and narrow refer to the lens's angle of view.

| Camera Model | Horizontal Angle of View | 35mm Equivalent Focal Length | Default Minimum Focus Distance (Infinity) |
| --- | --- | --- | --- |
| Survey3W | 87 degrees | 19mm | 32.07in (81.46cm) |
| Survey3N | 41 degrees | 47mm | 178.97in (454.58cm) |

You can adjust the lens focus distance by removing the green Survey3 faceplate and turning the lens. As you bring the focus closer, the lens will no longer focus out to infinity, so there will be a new maximum focus distance. If you need assistance calculating the adjusted focus range, please contact us.

Most of the time you will want to choose the Survey3W, as its wider angle of view makes it easier to use. If you are looking for the "best" lens, the one with the sharpest glass, least vignetting and least distortion, then the narrower Survey3N is your best choice.

Use our camera lens calculator to determine which lens model will work best for you.
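To get a feel for what the two angles of view mean in practice, here is a rough geometric sketch. It is not the camera lens calculator mentioned above. It assumes the camera points straight at a flat surface and uses only the horizontal angles from the lens table; the function name `ground_width` and the 100 m distance are illustrative choices, not part of any MAPIR tool.

```python
import math

# Horizontal angles of view in degrees, copied from the lens table.
HORIZONTAL_AOV_DEG = {"Survey3W": 87.0, "Survey3N": 41.0}


def ground_width(model: str, distance_m: float) -> float:
    """Approximate width of the scene covered at `distance_m`.

    Assumes the camera looks straight at a flat surface, so the covered
    width is 2 * distance * tan(angle_of_view / 2).
    """
    half_angle = math.radians(HORIZONTAL_AOV_DEG[model] / 2)
    return 2 * distance_m * math.tan(half_angle)


if __name__ == "__main__":
    for model in HORIZONTAL_AOV_DEG:
        width = ground_width(model, 100.0)
        print(f"{model}: about {width:.1f} m wide at 100 m")
```

At the same distance the Survey3W covers roughly two and a half times the width of the Survey3N, which is why the narrow lens needs more images (or more distance) to cover the same area.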

Choosing a Filter Option for Survey3:

We offer six filters for the Survey3 cameras: RGB, RGN, OCN, NGB, RE, and NIR.

| Camera Filter Model | Image Channels 1, 2, 3 | Spectrum Peaks |
| --- | --- | --- |
| RGN | Red, Green, Near Infrared (NIR) | 660nm, 550nm, 850nm |
| OCN | Orange, Cyan, Near Infrared (NIR) | 615nm, 490nm, 808nm |
| NGB | Near Infrared (NIR), Green, Blue | 850nm, 550nm, 475nm |
| RE | Red Edge | 725nm |
| NIR | Near Infrared (NIR) | 850nm |

| Spectrum Peak | Spectrum Width | Filter | Channel |
| --- | --- | --- | --- |
| 475nm | 15nm | NGB | 3 |
| 490nm | 36nm | OCN | 2 |
| 550nm | 15nm | NGB, RGN | 2 |
| 615nm | 42nm | OCN | 1 |
| 660nm | 15nm | RGN | 1 |
| 725nm | 23nm | RE | 1 |
| 808nm | 50nm | OCN | 3 |
| 850nm | 30nm | NGB, RGN, NIR | 1, 3, 1 |
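If you process images with your own scripts, it can help to keep the filter tables in a lookup structure so you never have to remember which channel holds which band. The sketch below simply transcribes the tables above; the names `FILTER_BANDS` and `channels_for_peak` are hypothetical, and the RGB filter is left out because the tables give no peak wavelengths for it.

```python
# Filter model -> list of (image channel, band name, spectrum peak in nm),
# transcribed from the filter tables. RGB is omitted: no peaks are listed.
FILTER_BANDS = {
    "RGN": [(1, "Red", 660), (2, "Green", 550), (3, "NIR", 850)],
    "OCN": [(1, "Orange", 615), (2, "Cyan", 490), (3, "NIR", 808)],
    "NGB": [(1, "NIR", 850), (2, "Green", 550), (3, "Blue", 475)],
    "RE": [(1, "Red Edge", 725)],
    "NIR": [(1, "NIR", 850)],
}


def channels_for_peak(peak_nm: int) -> dict:
    """Map each filter with a band at `peak_nm` to its 1-based image channel."""
    return {
        name: channel
        for name, bands in FILTER_BANDS.items()
        for channel, _label, peak in bands
        if peak == peak_nm
    }


if __name__ == "__main__":
    # Which filters capture 850nm, and in which channel?
    print(channels_for_peak(850))
```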

OCN Filter (Orange + Cyan + NIR):

Image Channel 1 = Orange Light

Image Channel 2 = Cyan (Blue/Green) Light

Image Channel 3 = NIR (Near Infrared) Light

The OCN filter is similar to the RGN: both can be used to calculate the NDVI index, but the OCN often provides increased contrast within vegetation and reduces soil noise. Use the OCN if there is a lot of soil among your vegetation, and the RGN if the crop has more of a solid canopy (few soil pixels). Please read HERE and HERE for more information about how the RGN and OCN compare.

RGN Filter (Red + Green + NIR):

Image Channel 1 = Red Light

Image Channel 2 = Green Light

Image Channel 3 = NIR (Near Infrared) Light

The RGN filter is our most commonly purchased model, mainly because it captures the Red and NIR wavelengths needed for the popular NDVI index (see below for more information). NDVI is typically used as a general plant health and vigor index: it shows you which regions are healthiest compared to those that are less healthy. Please read HERE and HERE for more information about how the RGN and OCN compare.

NGB Filter (NIR + Green + Blue):

Image Channel 1 = NIR (Near Infrared) Light

Image Channel 2 = Green Light

Image Channel 3 = Blue Light

The NGB filter is often used for the ENDVI (Enhanced NDVI) index. It is also commonly used when imaging water or vegetation in or under water, since it captures the blue spectrum. Many plants reflect blue and blue-green (cyan), so you may want to compare the results between the NGB and OCN filter models.
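This article does not spell out the ENDVI formula, so the sketch below uses the commonly cited form, ENDVI = ((NIR + Green) - 2 × Blue) / ((NIR + Green) + 2 × Blue); treat that as an assumption and check it against the index definition in your processing software. The function names are illustrative, and pixel values are assumed to be calibrated reflectances in NGB channel order (NIR, Green, Blue).

```python
def endvi(nir: float, green: float, blue: float) -> float:
    """ENDVI for one pixel, using the commonly cited form
    ((NIR + Green) - 2 * Blue) / ((NIR + Green) + 2 * Blue)."""
    denominator = (nir + green) + 2 * blue
    if denominator == 0:
        # Assumption: report 0 for an all-dark pixel instead of dividing by zero.
        return 0.0
    return ((nir + green) - 2 * blue) / denominator


def endvi_from_ngb_pixel(pixel) -> float:
    """NGB channel order: channel 1 = NIR, channel 2 = Green, channel 3 = Blue."""
    nir, green, blue = pixel
    return endvi(nir, green, blue)


if __name__ == "__main__":
    # Made-up reflectance values, for illustration only.
    print(round(endvi_from_ngb_pixel((0.50, 0.10, 0.05)), 3))
```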

RedEdge Filter (RE):

Image Channel 1 = RedEdge (725nm) Light

Image Channel 2 = Not Used

Image Channel 3 = Not Used

The RedEdge (RE) filter captures a single band of reflected light in the region known as the red edge. In this region, from about 700nm to 800nm, plant reflectance varies in a way that closely relates to plant health. A plant reflecting more red edge light will typically be healthier than one reflecting less. When processed with our MCC application, the output images contain a single image band, meaning they are black and white. A white pixel indicates high red edge reflectance, and a black pixel low red edge reflectance. You can disregard image channels 2 and 3, as they do not contain useful data compared to channel 1 (the red channel).

Near Infrared Filter (NIR):

Image Channel 1 = Near Infrared (850nm) Light

Image Channel 2 = Not Used

Image Channel 3 = Not Used

The near infrared (NIR) filter captures a single band of reflected near infrared light. When processed with our MCC application, the output images contain a single image band, meaning they are black and white. A white pixel indicates high NIR reflectance, and a black pixel low NIR reflectance. You can disregard image channels 2 and 3, as they do not contain useful data compared to channel 1 (the red channel).
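For the single-band RE and NIR filters, keeping only image channel 1 is a one-line operation. The sketch below assumes an image already loaded as rows of (channel 1, channel 2, channel 3) pixel tuples; the helper name `first_channel` is hypothetical and not part of MCC.

```python
def first_channel(image):
    """Keep only image channel 1 from rows of (ch1, ch2, ch3) pixels."""
    return [[pixel[0] for pixel in row] for row in image]


if __name__ == "__main__":
    # A tiny made-up 2x2 image; only the first value of each pixel matters.
    image = [
        [(200, 3, 1), (15, 2, 0)],
        [(120, 4, 2), (255, 1, 1)],
    ]
    print(first_channel(image))
```

If you load images with a library instead, check the channel order it returns before indexing. OpenCV's `cv2.imread`, for example, returns channels in blue, green, red order, so image channel 1 (red) is the last array channel there.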

RGB Filter (Red + Green + Blue):

Image Channel 1 = Red Light

Image Channel 2 = Green Light

Image Channel 3 = Blue Light

The RGB filter is the typical filter installed in most cameras; it captures visible color light, much as our eyes see the world. RGB cameras are commonly used alongside multispectral ones to provide a reference image for the viewer. This reference is often necessary to relate what our eyes see to what a camera capable of capturing near infrared (NIR) light sees.

Multispectral Index Formulas

After the images are captured by the OCN, RGN, NGB, RE and NIR model cameras, they should be calibrated in MCC for percent reflectance using images of our Calibration Targets and the log from the ambient light sensor (DAQ-A-SD). Once calibrated, the images can be stitched in the photogrammetry program of your choice. You can also upload to our MAPIR Cloud directly from the camera without needing to process in MCC.

Many of these applications provide what is called a raster (or index) calculator, which performs math on the image's pixel values. The range of the resulting pixel values depends on the index being computed. Many programs make this calculation a one-button process, but let's explain it in a little more detail:

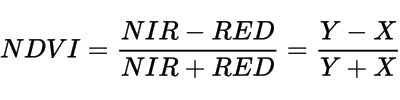

Let's use the RGN filter as an example and compute the popular NDVI index:

As you can see in the formula above, NDVI = (NIR - Red) / (NIR + Red), so the index uses NIR and red light. For the RGN filter camera models, those are the 3rd image channel (NIR) and the 1st image channel (red).

The processing program takes the pixel values in the 1st and 3rd image channels and plugs them into the above equation. Every resulting pixel then has a value between -1 and +1. For vegetation, NDVI values typically range from about 0.2 to 0.8. We then apply a color lookup table (LUT) to the pixels so that our eyes can more easily interpret the data. The LUT is the green-to-yellow-to-red (high health to low health) color scale you may have seen, such as in the animation below of RGB vs RGN (NDVI).
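The two steps described above (compute NDVI per pixel, then color it with a LUT) can be sketched in a few lines. This is a minimal illustration, not how MCC or any particular photogrammetry program implements it: the function names are made up, the pixel values are assumed to be calibrated reflectances in RGN channel order, and the three-stop red-yellow-green LUT spanning 0.2 to 0.8 is a hypothetical choice based on the typical vegetation range mentioned above.

```python
def ndvi(nir: float, red: float) -> float:
    """NDVI = (NIR - Red) / (NIR + Red), always between -1 and +1
    for non-negative inputs."""
    denominator = nir + red
    if denominator == 0:
        # Assumption: report 0 for an all-dark pixel instead of dividing by zero.
        return 0.0
    return (nir - red) / denominator


def ndvi_from_rgn_pixel(pixel) -> float:
    """RGN channel order: channel 1 = Red, channel 2 = Green, channel 3 = NIR."""
    red, _green, nir = pixel
    return ndvi(nir, red)


# Hypothetical LUT stops: (NDVI value, display color as 8-bit R, G, B).
LUT_STOPS = [
    (0.2, (255, 0, 0)),    # low health  -> red
    (0.5, (255, 255, 0)),  # medium      -> yellow
    (0.8, (0, 255, 0)),    # high health -> green
]


def colorize(value: float):
    """Linearly interpolate between LUT stops; clamp values outside them."""
    if value <= LUT_STOPS[0][0]:
        return LUT_STOPS[0][1]
    if value >= LUT_STOPS[-1][0]:
        return LUT_STOPS[-1][1]
    for (low, low_color), (high, high_color) in zip(LUT_STOPS, LUT_STOPS[1:]):
        if low <= value <= high:
            t = (value - low) / (high - low)
            return tuple(
                round(a + t * (b - a)) for a, b in zip(low_color, high_color)
            )


if __name__ == "__main__":
    pixel = (0.1, 0.2, 0.5)  # made-up red, green, NIR reflectances
    value = ndvi_from_rgn_pixel(pixel)
    print(round(value, 3), colorize(value))
```

For an OCN camera the same `ndvi` function applies, with the orange channel (image channel 1) taking the place of red.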